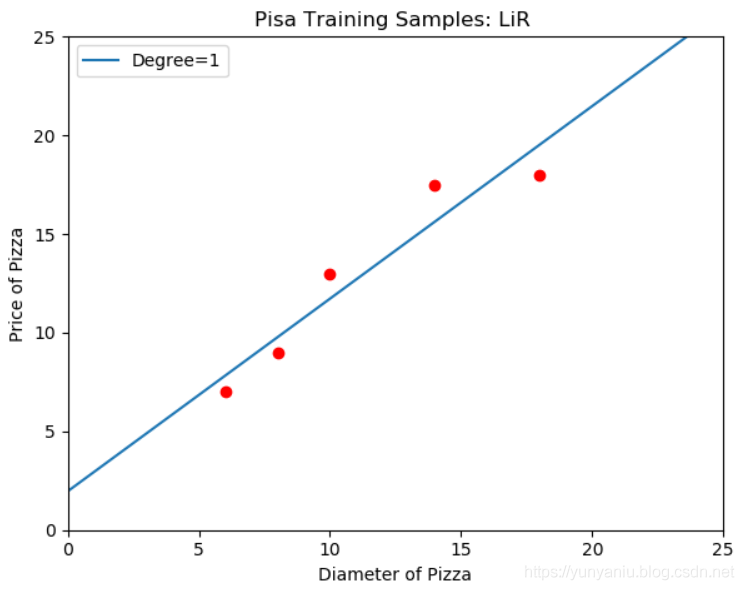

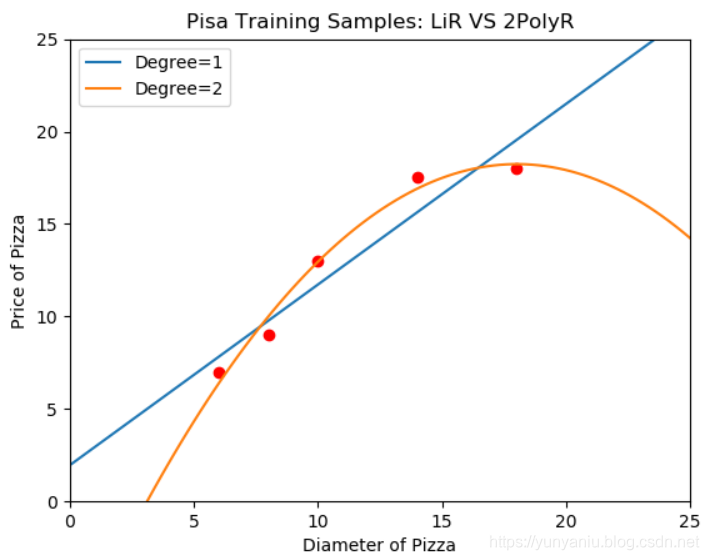

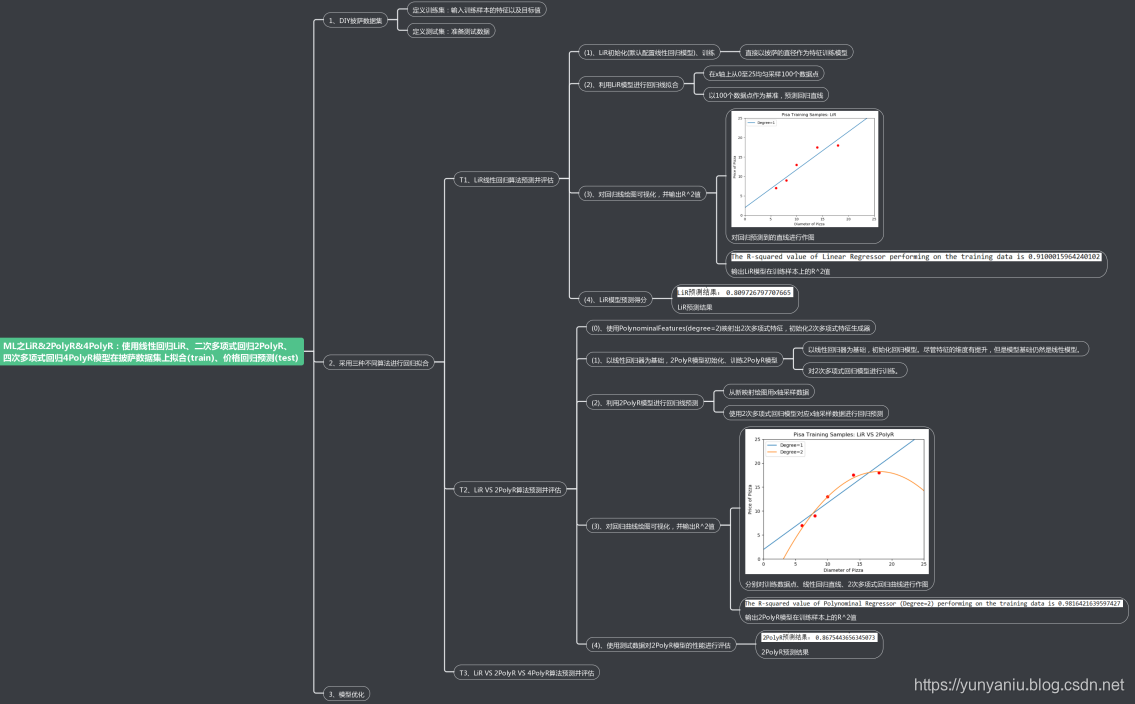

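ML, LiR & 2PolyR: Using linear regression (LiR) and quadratic polynomial regression (2PolyR) models to fit a pizza dataset (train) and predict prices by regression (test)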


Contents

Output


Design approach

Core code

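    from sklearn.linear_model import LinearRegression
    from sklearn.preprocessing import PolynomialFeatures

    # Expand the training inputs into degree-2 polynomial features.
    poly2 = PolynomialFeatures(degree=2)
    X_train_poly2 = poly2.fit_transform(X_train)

    # Fit an ordinary linear model on the expanded features.
    r_poly2 = LinearRegression()
    r_poly2.fit(X_train_poly2, y_train)
    # Store predictions under a new name so the transformer `poly2` is not overwritten.
    yy_poly2 = r_poly2.predict(xx_poly2)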
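The core code above assumes `X_train`, `y_train`, and the transformed inputs already exist. As a minimal end-to-end sketch, the following compares the linear and degree-2 models side by side. The diameter and price values are illustrative placeholders, not data taken from this post, and the names `r_lin`, `X_test_poly2`, `lin_r2`, and `poly2_r2` are assumptions introduced for the example.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.preprocessing import PolynomialFeatures

# Illustrative placeholder data (assumed, not from the post):
# one feature (pizza diameter) and one target (price).
X_train = np.array([[6], [8], [10], [14], [18]], dtype=float)
y_train = np.array([7, 9, 13, 17.5, 18], dtype=float)
X_test = np.array([[6], [8], [11], [16]], dtype=float)
y_test = np.array([8, 12, 15, 18], dtype=float)

# Baseline: linear regression on the raw diameter.
r_lin = LinearRegression()
r_lin.fit(X_train, y_train)

# Degree-2 model: expand x into [1, x, x^2], then fit a linear model on it.
poly2 = PolynomialFeatures(degree=2)
X_train_poly2 = poly2.fit_transform(X_train)
r_poly2 = LinearRegression()
r_poly2.fit(X_train_poly2, y_train)

# Reuse the already-fitted transformer on the test inputs.
X_test_poly2 = poly2.transform(X_test)

# score() returns the coefficient of determination (R^2).
lin_r2 = r_lin.score(X_test, y_test)
poly2_r2 = r_poly2.score(X_test_poly2, y_test)
print("LiR    test R^2:", lin_r2)
print("2PolyR test R^2:", poly2_r2)
```

Calling `poly2.transform` on the test inputs, rather than `fit_transform`, keeps the test-time feature layout identical to the one the regressor was trained on.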

PolynomialFeatures class source (from scikit-learn):

    class PolynomialFeatures(BaseEstimator, TransformerMixin):
        """Generate polynomial and interaction features.

        Generate a new feature matrix consisting of all polynomial combinations
        of the features with degree less than or equal to the specified degree.
        For example, if an input sample is two dimensional and of the form
        [a, b], the degree-2 polynomial features are [1, a, b, a^2, ab, b^2].

        Parameters
        ----------
        degree : integer
            The degree of the polynomial features. Default = 2.

        interaction_only : boolean, default = False
            If true, only interaction features are produced: features that are
            products of at most ``degree`` *distinct* input features (so not
            ``x[1] ** 2``, ``x[0] * x[2] ** 3``, etc.).

        include_bias : boolean
            If True (default), then include a bias column, the feature in which
            all polynomial powers are zero (i.e. a column of ones - acts as an
            intercept term in a linear model).

        Examples
        --------
        >>> X = np.arange(6).reshape(3, 2)
        >>> X
        array([[0, 1],
               [2, 3],
               [4, 5]])
        >>> poly = PolynomialFeatures(2)
        >>> poly.fit_transform(X)
        array([[  1.,   0.,   1.,   0.,   0.,   1.],
               [  1.,   2.,   3.,   4.,   6.,   9.],
               [  1.,   4.,   5.,  16.,  20.,  25.]])
        >>> poly = PolynomialFeatures(interaction_only=True)
        >>> poly.fit_transform(X)
        array([[  1.,   0.,   1.,   0.],
               [  1.,   2.,   3.,   6.],
               [  1.,   4.,   5.,  20.]])

        Attributes
        ----------
        powers_ : array, shape (n_output_features, n_input_features)
            powers_[i, j] is the exponent of the jth input in the ith output.

        n_input_features_ : int
            The total number of input features.

        n_output_features_ : int
            The total number of polynomial output features. The number of output
            features is computed by iterating over all suitably sized combinations
            of input features.

        Notes
        -----
        Be aware that the number of features in the output array scales
        polynomially in the number of features of the input array, and
        exponentially in the degree. High degrees can cause overfitting.

        See :ref:`examples/linear_model/plot_polynomial_interpolation.py
        <sphx_glr_auto_examples_linear_model_plot_polynomial_interpolation.py>`
        """
        def __init__(self, degree=2, interaction_only=False, include_bias=True):
            self.degree = degree
            self.interaction_only = interaction_only
            self.include_bias = include_bias

        @staticmethod
        def _combinations(n_features, degree, interaction_only, include_bias):
            comb = combinations if interaction_only else combinations_w_r
            start = int(not include_bias)
            return chain.from_iterable(comb(range(n_features), i) for
                                       i in range(start, degree + 1))

        @property
        def powers_(self):
            check_is_fitted(self, 'n_input_features_')
            combinations = self._combinations(self.n_input_features_, self.degree,
                                              self.interaction_only,
                                              self.include_bias)
            return np.vstack(np.bincount(c, minlength=self.n_input_features_) for
                             c in combinations)

        def get_feature_names(self, input_features=None):
            """
            Return feature names for output features

            Parameters
            ----------
            input_features : list of string, length n_features, optional
                String names for input features if available. By default,
                "x0", "x1", ... "xn_features" is used.

            Returns
            -------
            output_feature_names : list of string, length n_output_features
            """
            powers = self.powers_
            if input_features is None:
                input_features = ['x%d' % i for i in range(powers.shape[1])]
            feature_names = []
            for row in powers:
                inds = np.where(row)[0]
                if len(inds):
                    name = " ".join(
                        "%s^%d" % (input_features[ind], exp) if exp != 1 else
                        input_features[ind] for
                        (ind, exp) in zip(inds, row[inds]))
                else:
                    name = "1"
                feature_names.append(name)

            return feature_names

        def fit(self, X, y=None):
            """
            Compute number of output features.

            Parameters
            ----------
            X : array-like, shape (n_samples, n_features)
                The data.

            Returns
            -------
            self : instance
            """
            n_samples, n_features = check_array(X).shape
            combinations = self._combinations(n_features, self.degree,
                                              self.interaction_only,
                                              self.include_bias)
            self.n_input_features_ = n_features
            self.n_output_features_ = sum(1 for _ in combinations)
            return self

        def transform(self, X):
            """Transform data to polynomial features

            Parameters
            ----------
            X : array-like, shape [n_samples, n_features]
                The data to transform, row by row.

            Returns
            -------
            XP : np.ndarray shape [n_samples, NP]
                The matrix of features, where NP is the number of polynomial
                features generated from the combination of inputs.
            """
            check_is_fitted(self, ['n_input_features_', 'n_output_features_'])
            X = check_array(X, dtype=FLOAT_DTYPES)
            n_samples, n_features = X.shape
            if n_features != self.n_input_features_:
                raise ValueError("X shape does not match training shape")
            # allocate output data
            XP = np.empty((n_samples, self.n_output_features_), dtype=X.dtype)
            combinations = self._combinations(n_features, self.degree,
                                              self.interaction_only,
                                              self.include_bias)
            for i, c in enumerate(combinations):
                XP[:, i] = X[:, c].prod(1)

            return XP
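The `_combinations` and `transform` methods in the class source can be illustrated with a small standalone sketch. It is a simplified re-creation for illustration only, not the library's implementation: it covers only the default case (`interaction_only=False`, `include_bias=True`), and the function names `poly_index_combinations` and `poly_expand` are hypothetical.

```python
from itertools import chain, combinations_with_replacement

import numpy as np


def poly_index_combinations(n_features, degree):
    """Yield one tuple of column indices per monomial of total degree 0..degree."""
    return chain.from_iterable(
        combinations_with_replacement(range(n_features), d)
        for d in range(degree + 1)
    )


def poly_expand(X, degree=2):
    """Build the polynomial feature matrix column by column."""
    X = np.asarray(X, dtype=float)
    combos = list(poly_index_combinations(X.shape[1], degree))
    XP = np.empty((X.shape[0], len(combos)), dtype=X.dtype)
    for i, c in enumerate(combos):
        # Multiply the selected columns together row by row.
        # The empty tuple selects no columns, so the product is 1 (bias column).
        XP[:, i] = X[:, np.array(c, dtype=int)].prod(axis=1)
    return XP


# Same input as the docstring example: rows of the form [a, b].
X = np.arange(6).reshape(3, 2)
XP = poly_expand(X, degree=2)
print(XP)  # columns are [1, a, b, a^2, ab, b^2]
```

Each index tuple names the input columns whose product forms one output column, so `(0, 1)` gives `a*b` and `(1, 1)` gives `b^2`. Counting how often each index appears in a tuple is how `powers_` recovers the exponents.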
