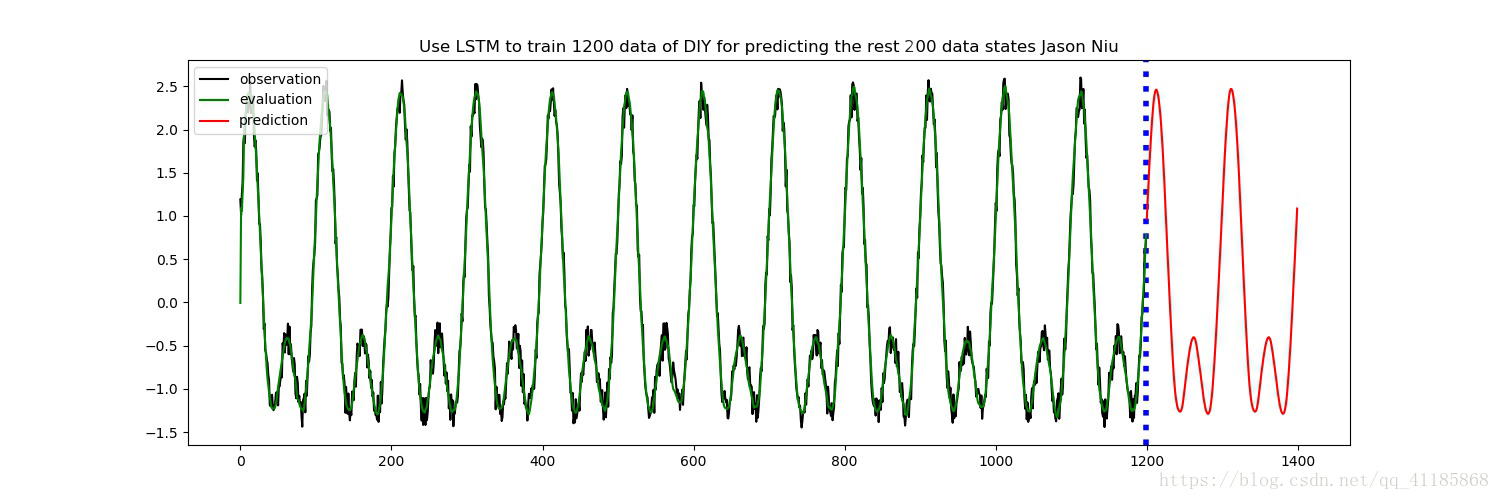

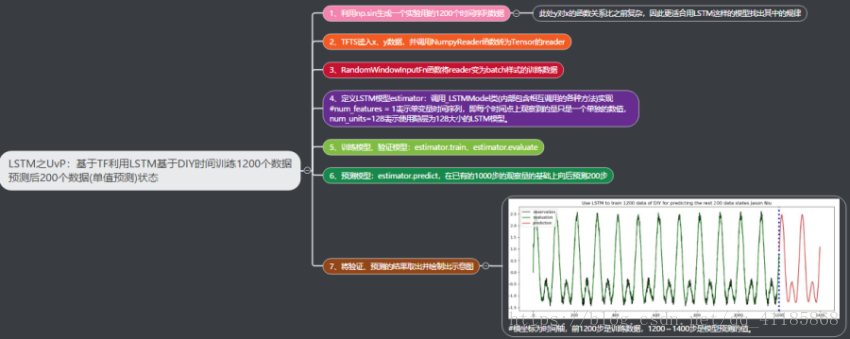

DL / LSTM / UvP: Using an LSTM in TensorFlow to train on 1,200 points of a DIY time series and predict the following 200 points


Contents

Output results

Design approach
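The data setup named in the title (train on the first 1,200 points, then predict the next 200) can be sketched in plain Python. This is only an illustration under stated assumptions: the post does not show how its DIY series is built, so `make_series` is a hypothetical stand-in (sinusoids plus Gaussian noise), and `WINDOW_SIZE` is a hypothetical window length rather than a value taken from the post. Only the 1,200 / 200 split and the time indices 0 to 1199 come from the title and the training log.

```python
import math
import random

TRAIN_POINTS = 1200   # from the title: number of points used for training
FORECAST_STEPS = 200  # from the title: number of points predicted afterwards
WINDOW_SIZE = 100     # hypothetical window length, not taken from the post


def make_series(n, seed=0):
    """Hypothetical stand-in for the post's DIY series: sinusoids plus noise."""
    rng = random.Random(seed)
    return [
        math.sin(math.pi * t / 50) + math.cos(math.pi * t / 25) + rng.gauss(0.0, 0.1)
        for t in range(n)
    ]


def sliding_windows(values, window_size):
    """Return every contiguous window of length window_size."""
    return [values[i:i + window_size] for i in range(len(values) - window_size + 1)]


series = make_series(TRAIN_POINTS + FORECAST_STEPS)

# Training range: time indices 0..1199, matching the `times` array in the log.
train_times = list(range(TRAIN_POINTS))
train_values = series[:TRAIN_POINTS]

# Forecast range: the 200 time indices that follow the training range.
forecast_times = list(range(TRAIN_POINTS, TRAIN_POINTS + FORECAST_STEPS))
held_out_values = series[TRAIN_POINTS:]

# Fixed-length windows of the training range, as a sequence model would consume them.
windows = sliding_windows(train_values, WINDOW_SIZE)
```

Keeping the last 200 points out of training means the predictions for `forecast_times` can later be compared against `held_out_values`.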

Full training log

- INFO:tensorflow:loss = 0.496935, step = 1

- INFO:tensorflow:global_step/sec: 7.44562

- INFO:tensorflow:loss = 0.0289763, step = 101 (13.432 sec)

- INFO:tensorflow:global_step/sec: 6.42037

- INFO:tensorflow:loss = 0.0200101, step = 201 (15.575 sec)

- INFO:tensorflow:global_step/sec: 5.56483

- INFO:tensorflow:loss = 0.0195363, step = 301 (17.971 sec)

- INFO:tensorflow:global_step/sec: 5.30867

- INFO:tensorflow:loss = 0.0141311, step = 401 (18.836 sec)

- INFO:tensorflow:global_step/sec: 5.41209

- INFO:tensorflow:loss = 0.014299, step = 501 (18.479 sec)

- INFO:tensorflow:global_step/sec: 4.92611

- INFO:tensorflow:loss = 0.0155927, step = 601 (20.298 sec)

- INFO:tensorflow:global_step/sec: 5.11247

- INFO:tensorflow:loss = 0.0130529, step = 701 (19.563 sec)

- INFO:tensorflow:global_step/sec: 4.71378

- INFO:tensorflow:loss = 0.0131998, step = 801 (21.211 sec)

- INFO:tensorflow:global_step/sec: 4.71155

- INFO:tensorflow:loss = 0.0143074, step = 901 (21.224 sec)

- INFO:tensorflow:global_step/sec: 5.07501

- INFO:tensorflow:loss = 0.0160928, step = 1001 (19.704 sec)

- INFO:tensorflow:global_step/sec: 4.85088

- INFO:tensorflow:loss = 0.00991265, step = 1101 (20.615 sec)

- INFO:tensorflow:global_step/sec: 4.93806

- INFO:tensorflow:loss = 0.0125441, step = 1201 (20.251 sec)

- INFO:tensorflow:global_step/sec: 5.40711

- INFO:tensorflow:loss = 0.0127672, step = 1301 (18.497 sec)

- INFO:tensorflow:global_step/sec: 4.92733

- INFO:tensorflow:loss = 0.0109727, step = 1401 (20.294 sec)

- INFO:tensorflow:global_step/sec: 4.42869

- INFO:tensorflow:loss = 0.0138402, step = 1501 (22.578 sec)

- INFO:tensorflow:global_step/sec: 4.902

- INFO:tensorflow:loss = 0.00974652, step = 1601 (20.401 sec)

- INFO:tensorflow:global_step/sec: 5.87293

- INFO:tensorflow:loss = 0.010258, step = 1701 (17.029 sec)

- INFO:tensorflow:global_step/sec: 5.88471

- INFO:tensorflow:loss = 0.0119193, step = 1801 (16.991 sec)

- INFO:tensorflow:global_step/sec: 5.89885

- INFO:tensorflow:loss = 0.0130985, step = 1901 (16.951 sec)

- INFO:tensorflow:Saving checkpoints for 2000 into C:\Users\----------\AppData\Local\Temp\tmpihfq7_j1\model.ckpt.

- INFO:tensorflow:Loss for final step: 0.0151946.

- INFO:tensorflow:Starting evaluation at 2018-10-17-02:30:52

- 2018-10-17 10:30:52.385626: I C:\tf_jenkins\home\workspace\rel-win\M\windows-gpu\PY\36\tensorflow\core\common_runtime\gpu\gpu_device.cc:1120] Creating TensorFlow device (/device:GPU:0) -> (device: 0, name: GeForce 940MX, pci bus id: 0000:01:00.0, compute capability: 5.0)

- INFO:tensorflow:Restoring parameters from C:\Users\------------\AppData\Local\Temp\tmpihfq7_j1\model.ckpt-2000

- INFO:tensorflow:Evaluation [1/1]

- INFO:tensorflow:Finished evaluation at 2018-10-17-02:30:54

- INFO:tensorflow:Saving dict for global step 2000: global_step = 2000, loss = 0.00669927, mean = [[[-0.00664094]
  [ 1.0939678 ]
  [ 1.05662227]
  ...,
  [ 0.36486277]
  [ 0.60855114]
  [ 0.78335267]]], observed = [[[ 1.19162858]
  [ 1.01151669]
  [ 1.2492404 ]
  ...,
  [ 0.58971846]
  [ 0.59429663]
  [ 0.75463229]]], start_tuple = (array([1199], dtype=int64), array([[ 0.49354312]], dtype=float32), [array([[ 2.80782163e-01, -2.79586822e-01, -1.27018601e-01,
  -1.37267247e-01, -9.66532946e-01, 1.77575842e-01,
  2.53590047e-01, -1.71174794e-01, 3.26722264e-01,
  -1.53287530e-01, 3.41674328e-01, -2.82110858e+00,
  -8.25344026e-02, -6.24774337e-01, -1.03143930e-01,
  1.69074144e-02, 5.53107023e-01, 6.09656096e-01,
  2.15942681e-01, 2.38117671e+00, -4.35474813e-01,
  -8.18751156e-02, -8.21040720e-02, 5.50756678e-02,
  3.19993496e-01, 4.17956561e-02, 3.75264943e-01,
  -4.79493916e-01, 6.70328498e-01, -2.11113644e+00,
  7.29807839e-03, 4.77858186e-02, 6.35303259e-01,
  -1.65449232e-01, 7.43012428e-01, -3.35779548e-01,
  1.10672331e+00, 8.13182443e-02, 3.63576859e-01,
  2.60270387e-03, 1.08874297e+00, -1.23278670e-01,
  4.51275796e-01, 1.38471827e-01, -2.19891405e+00,
  4.55228150e-01, 3.76752585e-01, 4.18297976e-01,
  -1.29674762e-01, -5.27077794e-01, 2.10172087e-02,
  9.18253139e-02, -4.42963123e-01, 8.60494375e-01,
  -1.56141710e+00, 6.13127127e-02, 1.06652260e+00,
  -1.64958403e-01, -2.49342889e-01, -3.20325941e-02,
  6.25251114e-01, -9.56333756e-01, 6.22645095e-02,
  -1.85177767e+00, -1.18895024e-02, -1.25629926e+00,
  -8.09278548e-01, -1.56489462e-01, 4.20603305e-01,
  1.36081472e-01, 4.73593265e-01, -7.08300769e-02,
  -9.10878852e-02, 2.92861044e-01, -1.19632289e-01,
  6.10221215e-02, -4.23507988e-01, 1.39661419e+00,
  -3.00004274e-01, -2.10687280e-01, -1.49481639e-01,
  3.21967512e-01, 2.97538459e-01, -1.35252133e-01,
  1.09200977e-01, 1.85446128e-01, 3.46938014e-01,
  2.08598793e-01, -3.52784902e-01, -2.46544376e-01,
  7.78264701e-02, -3.51242304e-01, -3.57431412e-01,
  3.66707861e-01, 1.21410508e-02, -8.59300196e-01,
  4.11556125e-01, -6.82742074e-02, 1.10266757e+00,
  -4.94556457e-01, -5.72922267e-02, 3.00662041e-01,
  -1.90176621e-01, -6.88186646e-01, 1.37748182e-01,
  3.30467284e-01, 6.39625788e-01, -5.39625525e-01,
  3.10799032e-01, -1.74361169e-01, -1.03101039e+00,
  1.62974745e-01, -4.43051122e-02, -8.31307888e-01,
  -8.95474315e-01, 1.87550467e-02, -2.91507039e-02,
  -2.40048468e-01, -4.92638528e-01, 1.22031212e+00,
  -1.03123677e+00, 1.15175478e-01, -4.30590212e-01,
  3.07760298e-01, -2.37644076e+00, 6.80060592e-04,
  1.45029235e+00, -1.63179412e-01]], dtype=float32), array([[ 1.56333834e-01, -8.78753215e-02, -4.04292978e-02,
  -5.39183915e-02, -4.08501059e-01, 6.91992342e-02,
  1.21542148e-01, -7.25040585e-02, 1.23656943e-01,
  -6.08574860e-02, 1.73645392e-01, -5.46148360e-01,
  -3.08538955e-02, -2.52630085e-01, -4.92266566e-02,
  6.05375459e-03, 2.12676853e-01, 2.12552696e-01,
  9.57069546e-02, 6.01795316e-01, -1.65934741e-01,
  -2.18765661e-02, -2.14456674e-02, 2.04520095e-02,
  1.51839703e-01, 1.74900144e-02, 1.79528132e-01,
  -1.59714103e-01, 2.98072249e-01, -5.45185566e-01,
  2.36531044e-03, 1.51053490e-02, 3.07202935e-01,
  -5.08357324e-02, 2.96984106e-01, -1.09672762e-01,
  5.08848071e-01, 3.89396697e-02, 1.46594375e-01,
  5.78465813e-04, 4.44073975e-01, -5.69530763e-02,
  1.92802429e-01, 4.73112799e-02, -5.95022798e-01,
  1.91753775e-01, 1.71405807e-01, 1.87628955e-01,
  -5.02609573e-02, -2.27603659e-01, 8.66749138e-03,
  3.60240042e-02, -2.11831197e-01, 4.81612295e-01,
  -4.55988973e-01, 2.93113627e-02, 4.87764150e-01,
  -6.91987425e-02, -1.01128876e-01, -1.40920663e-02,
  2.75271446e-01, -4.79507029e-01, 2.70537268e-02,
  -4.76451099e-01, -4.29895753e-03, -4.62495953e-01,
  -3.31368059e-01, -7.12227523e-02, 2.01868847e-01,
  5.63942678e-02, 1.58110172e-01, -1.71409473e-02,
  -3.63412760e-02, 1.35076761e-01, -4.54869717e-02,
  1.97849423e-02, -2.08776698e-01, 5.99132776e-01,
  -9.71668810e-02, -8.88494924e-02, -5.91017641e-02,
  1.46009699e-01, 1.49578944e-01, -6.66293278e-02,
  4.50978763e-02, 5.90396933e-02, 1.33028805e-01,
  7.05365539e-02, -1.45839781e-01, -9.20177996e-02,
  3.21419686e-02, -1.38211146e-01, -1.18100852e-01,
  2.14208573e-01, 4.81602084e-03, -3.42105478e-01,
  1.84565291e-01, -2.92618871e-02, 3.88085902e-01,
  -2.48049170e-01, -2.79053152e-02, 1.52629077e-01,
  -6.13191538e-02, -3.08730781e-01, 4.58811522e-02,
  1.47217959e-01, 2.71202058e-01, -2.18348011e-01,
  1.24512725e-01, -5.67152649e-02, -5.15142381e-01,
  5.49797714e-02, -1.24295102e-02, -4.49374735e-01,
  -3.59201699e-01, 7.46859424e-03, -1.10175423e-02,
  -1.09787628e-01, -2.06070423e-01, 3.97450179e-01,
  -3.43233734e-01, 2.82084029e-02, -2.50802487e-01,
  1.35858551e-01, -7.15136528e-01, 1.41225886e-04,
  3.52943033e-01, -5.17948419e-02]], dtype=float32)]), times = [[ 0 1 2 ..., 1197 1198 1199]]
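To follow the loss more easily than by reading the raw log, the `loss = ..., step = ...` and `global_step/sec` lines can be pulled out with two regular expressions. The following is a minimal sketch, not part of the original post: `parse_training_log` and the two pattern names are hypothetical helpers, and the sample lines are copied from the first entries of the log above.

```python
import re

# Matches lines such as "INFO:tensorflow:loss = 0.0289763, step = 101 (13.432 sec)".
LOSS_RE = re.compile(r"loss = ([0-9.eE+-]+), step = (\d+)")
# Matches lines such as "INFO:tensorflow:global_step/sec: 6.42037".
RATE_RE = re.compile(r"global_step/sec: ([0-9.eE+-]+)")


def parse_training_log(lines):
    """Return ([(step, loss), ...], [steps_per_sec, ...]) from estimator log lines."""
    losses = []
    rates = []
    for line in lines:
        loss_match = LOSS_RE.search(line)
        if loss_match:
            losses.append((int(loss_match.group(2)), float(loss_match.group(1))))
            continue
        rate_match = RATE_RE.search(line)
        if rate_match:
            rates.append(float(rate_match.group(1)))
    return losses, rates


sample = [
    "INFO:tensorflow:loss = 0.496935, step = 1",
    "INFO:tensorflow:global_step/sec: 7.44562",
    "INFO:tensorflow:loss = 0.0289763, step = 101 (13.432 sec)",
    "INFO:tensorflow:global_step/sec: 6.42037",
    "INFO:tensorflow:loss = 0.0200101, step = 201 (15.575 sec)",
]
losses, rates = parse_training_log(sample)
```

The resulting `(step, loss)` pairs can be passed to any plotting tool. The final evaluation entry (`Saving dict for global step 2000 ...`) uses a different layout and is deliberately not matched by these patterns.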
